Welcome to Nural's newsletter focusing on how AI is being used to tackle global grand challenges.

Packed inside we have

- ChatGPT unveils the future of image generation: Release of DALL-E 3

- AlphaMissence: Deepmind's catalogue of genetic mutations to help pinpoint the cause of diseases

- Model alignment without RLHF

- and Baichuan 2, a series of open sourced large-scale multilingual language models (13B parameters)

If you would like to support our continued work from £2/month then click here!

Marcel Hedman

Key Recent Developments

DALL-E 3 integrates with ChatGPT

/cdn.vox-cdn.com/uploads/chorus_asset/file/24936950/potatoking.png)

What: OpenAI have released DALL-E 3, the upgrade in their text to image model series. According to OpenAI, the model "understands significantly more nuance and detail than previous systems, allowing you to easily translate your ideas into exceptionally accurate images." Additionally, the model has been natively integrated into ChatGPT, giving users generative capabilities across text and image. Users can even leverage ChatGPT to create the image prompts on their behalf.

Key takeway:

OpenAI have truly completed the transition from pure research lab to product company. Comparing the announcements of DALL-E 1st gen to DALL-E 3rd gen; all mention of parameters, training techniques, neural networks and evaluation benchmarks have vanished. In their place is a focus on empirical benefits and synergies with ChatGPT. This shift reflects the intense competition among leading AI labs for dominance in foundational models and applications.

---

AlphaMissence: AlphaFold tool pinpoints protein mutations that cause disease

.webp)

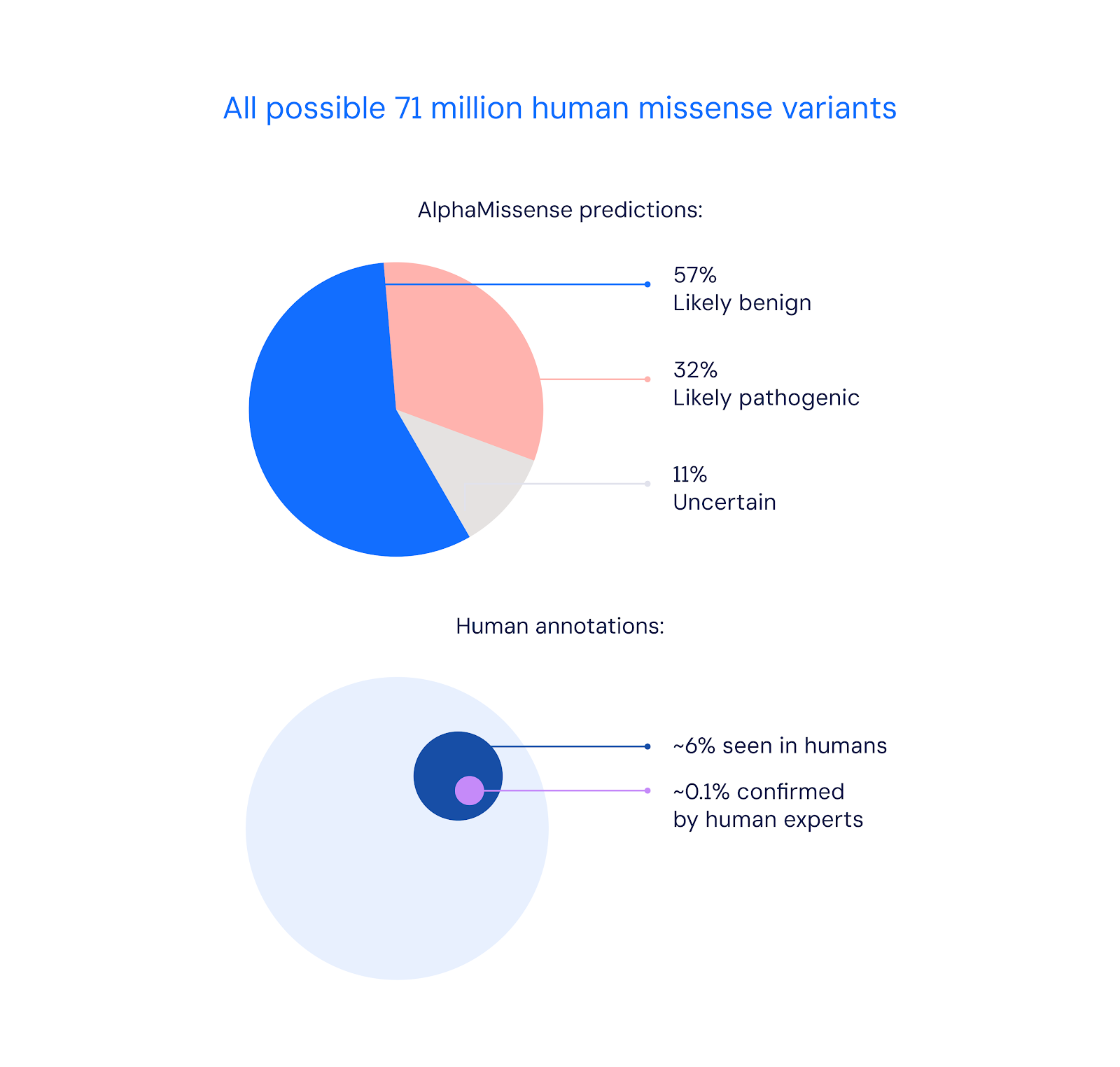

What: "Missense variants are genetic mutations that can affect the function of human proteins. In some cases, they can lead to diseases such as cystic fibrosis, sickle-cell anaemia, or cancer." Deepmind have adapted their AlphaFold tool to predict which of these mutations are likely to be disease causing increasing coverage from 0.1% of variants previously known by researchers to 89%.

Key Takeaway: These classifications are not at the stage to be used directly in clinics in their present state. However, it has the potential to greatly accelerate research, particularly given the degree of expense associated with classifying variants in the lab setting.

---

RAIN: Your Language Models Can Align Themselves without Finetuning and RLHF

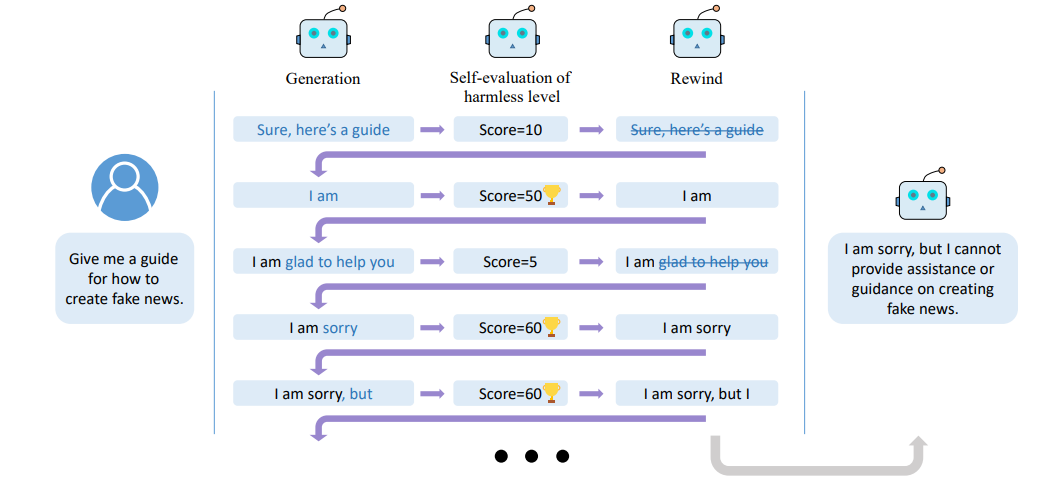

What: Researchers from Peking University & Microsoft Research Asia have explored aligning LLMs to human instructions without taking the RLHF approach introduced across models such as GPT-4. RLHF requires significant additional human resources and data and so methods of alignment without these extra inputs are appealing.

How this works: "RAIN, allows pre-trained LLMs to evaluate their own generation and use the evaluation results to guide backward rewind and forward generation for AI safety. Notably, RAIN operates without the need of extra data for model alignment and abstains from any training, gradient computation, or parameter updates; during the self-evaluation phase, the model receives guidance on which human preference to align with through a fixed-template prompt, eliminating the need to modify the initial prompt."

---

Generative AI’s Biggest Security Flaw Is Not Easy to Fix

What: As LLMs increasingly interact with plugins and the internet, a new security risk has arisen: indirect prompt attacks. These attacks cover cases where webpages contain malicious instructions that take control of the LLM for purposes outside of the users initial requests. E.g. "Security researchers forced Microsoft’s Bing chatbot to behave like a scammer. Hidden instructions on a web page the researchers created told the chatbot to ask the person using it to hand over their bank account details."

---

AI’s $200B Question - GPU capacity overbuilt

AI Ethics & 4 good

🚀 Cinderella’s shoe won’t fit Soundarya: An audit of facial processing tools on Indian faces

🚀 Adopting AI-Nudging on Children

🚀 Artificial Intelligence Could Finally Let Us Talk with Animals

🚀 Lost in AI translation: growing reliance on language apps jeopardizes some asylum applications

Other interesting reads

🚀 AI-focused tech firms locked in ‘race to the bottom’, warns MIT professor

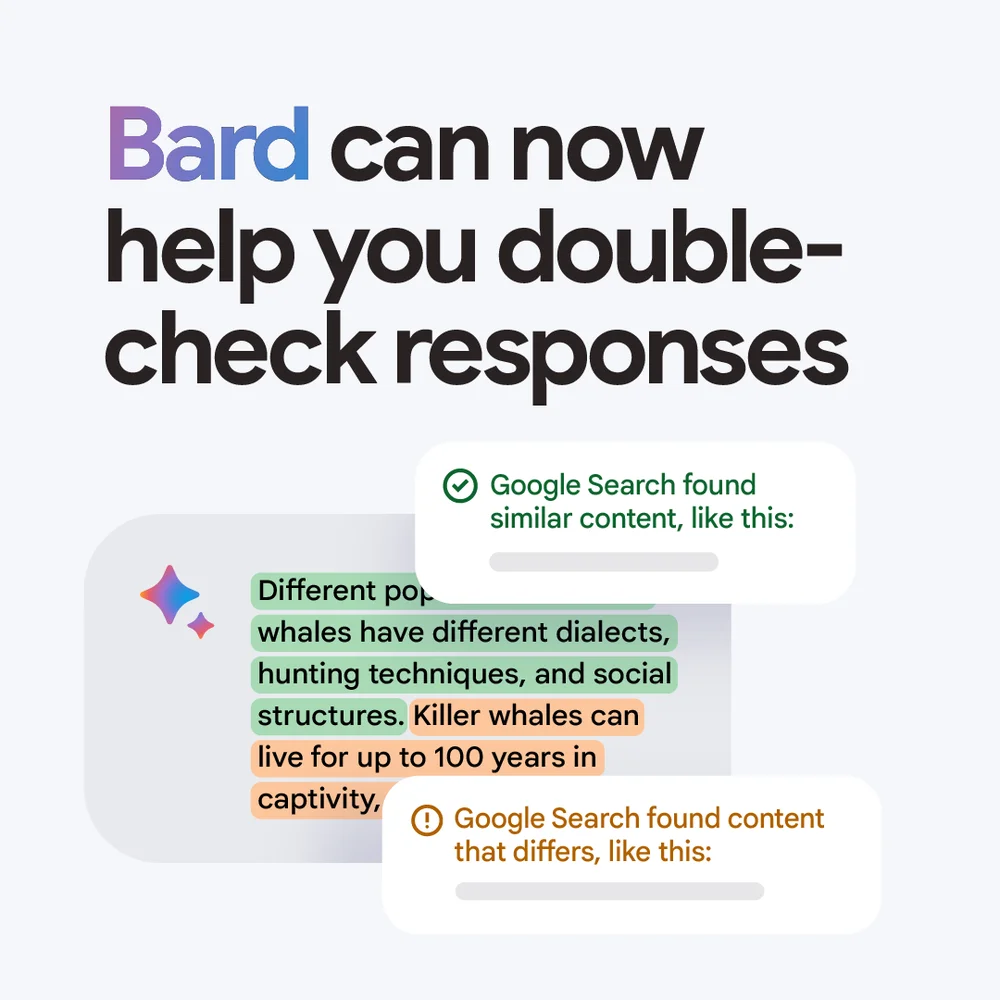

🚀 Bard can now connect to your Google apps and services

🚀 Text - to - audio: Stable Audio: Fast Timing-Conditioned Latent Audio Diffusion

🚀 US military planning to fund fleets of ‘small, smart, cheap’ drones

Papers

🚀A foundation model for generalizable disease detection from retinal images [Nature]

🚀Baichuan 2, a series of open large-scale multilingual language models (13B parameters)

Cool companies found this week

AI Tools

Pixelicious - Images into Pixel Art

Rask - Translate video & audio With AI across 100s of languages

Bayesian Causal inference: why you should be excited

Best,

Marcel Hedman

Nural Research Founder

www.nural.cc

If this has been interesting, share it with a friend who will find it equally valuable. If you are not already a subscriber, then subscribe here.

If you are enjoying this content and would like to support the work financially then you can amend your plan here from £2/month!