Welcome to Nural's newsletter focusing on how AI is being used to tackle global grand challenges.

Packed inside we have

- NExT-GPT: Moving to multimodal inputs and outputs

- Amazon to invest up to $4 billion in AI startup Anthropic

- a dataset of 1 million real-world conversations with 25 SOTA LLMs

- and a new Bayesian deep learning library for scalable BNNs

If you would like to support our continued work from £2/month then click here!

Marcel Hedman

Key Recent Developments

Amazon to invest up to $4 billion in AI startup Anthropic

What: In a direct move against Microsoft (+OpenAI), Amazon has agreed to invest up to $4bn in Anthropic, with the first tranche starting at $1.25bn. "As part of the investment agreement, Anthropic will use Amazon’s cloud giant AWS as a primary cloud provider for mission-critical workloads, including safety research and future foundation model development."

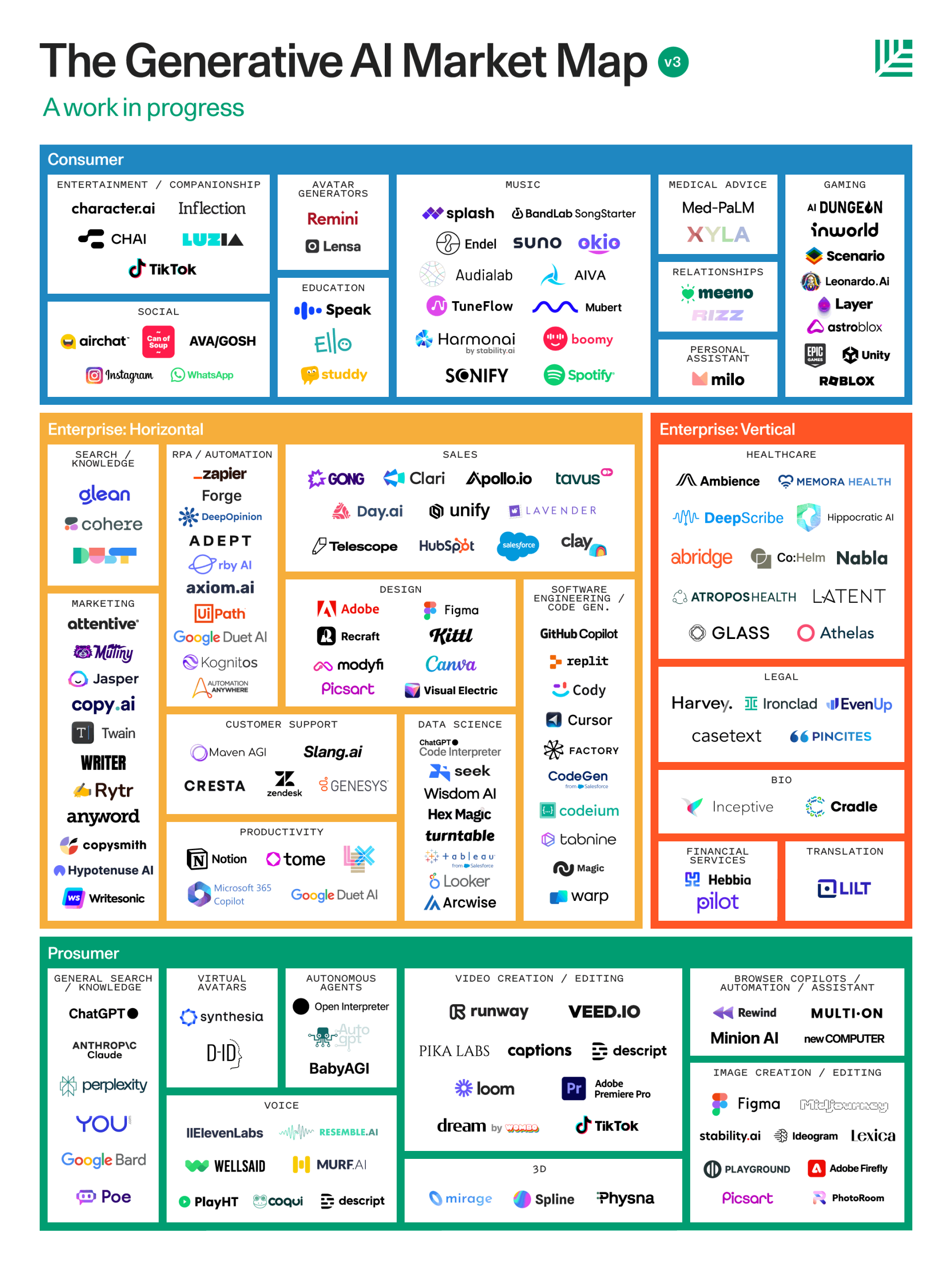

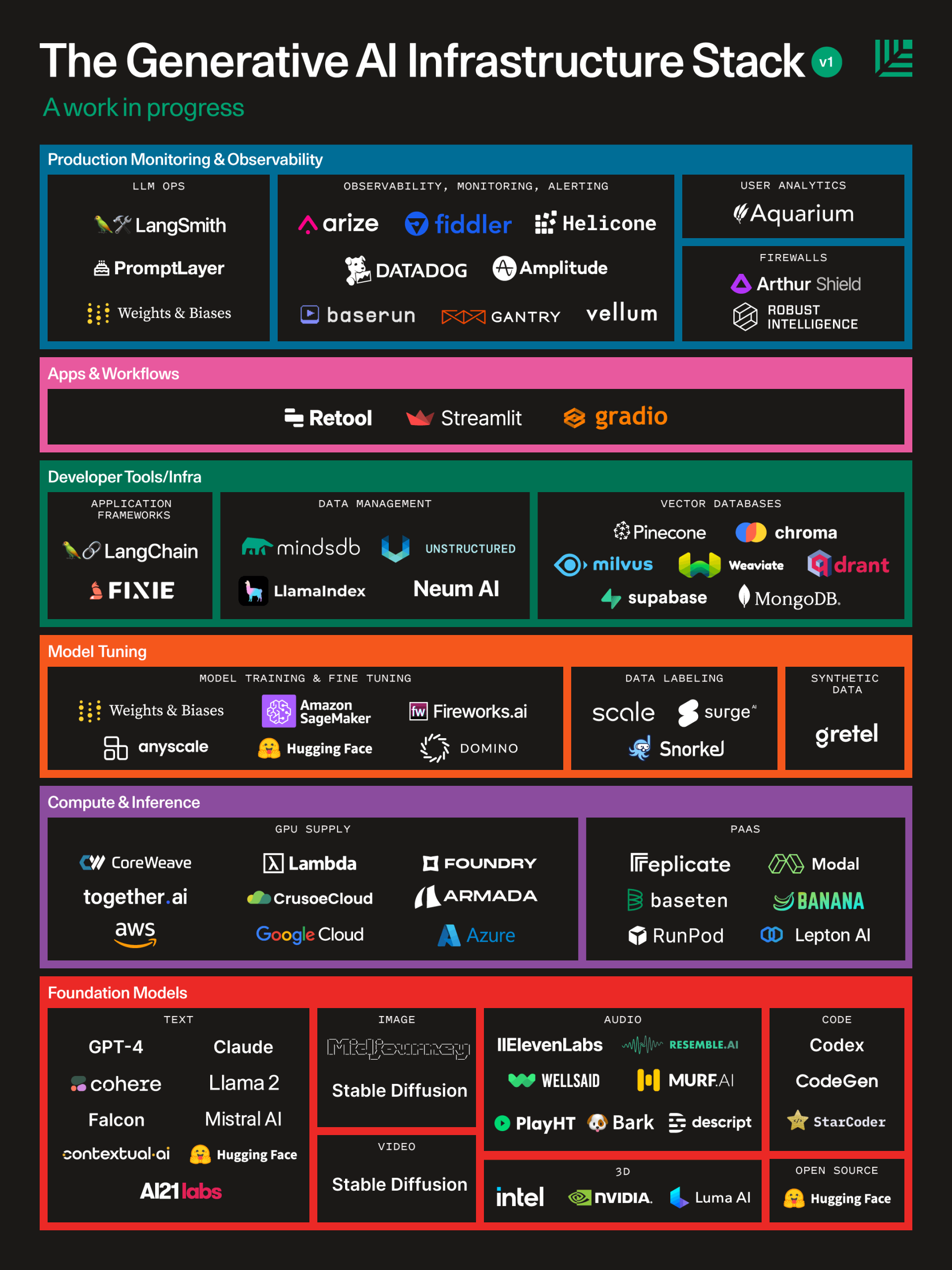

Key Takeaway: Industry research labs are deploying more resources than ever before to claim dominance at the foundation model layer. So far the bets seem to be paying off: Microsoft has invested c.$11bn in OpenAI, which has reported annual revenues of $1bn.

With the scale of spending increasing so significantly, will open source be able to remain on par?

Adept AI: Open source release of computer-task focused LLM

What: Adept, the lab attempting to solve natural language interactions with your PC, have open sourced Persimmon-8B.

"It boasts a context size four times that of LLaMA2 and eight times that of models like GPT-3, enabling it to tackle context-bound tasks with greater finesse. Moreover, its performance is on par with, if not surpassing, other models in its size range despite being trained on significantly less data. This exemplifies the efficiency and effectiveness of the model’s training process." - Marktechpost

Key Takeaway: This development is at the intersection of two promising trends:

1) The increased intelligence of smaller models. GPT-4 is reported to have 1.7 trillion parameters, making Persimmon >200x smaller. This enables use on smaller devices that lack the computational power to run the larger models.

2) An increasing number of open source variants. As large closed AI labs pour billions into SOTA foundation models, it appears that the medium to small model landscape will be dominated by open source, task-specific variants.

‘Game of Thrones’ creator and other authors sue ChatGPT-maker OpenAI for copyright infringement

What: "John Grisham, Jodi Picoult and George R.R. Martin are among 17 authors suing OpenAI for “systematic theft on a mass scale,” the latest in a wave of legal action by writers concerned that artificial intelligence programs are using their copyrighted works without permission."

Key Takeaway: While regulation is still being formed to address data privacy, ownership and distribution challenges around AI use, we are already seeing real-world effects. Instances of creators competing for roles against AI-generated versions of themselves are becoming more prevalent. How these pioneering cases are settled will have large implications for future interactions between AI enterprises and creators.

AI Ethics & 4 good

🚀 MIT scholars awarded seed grants to probe the social implications of generative AI

🚀 Third-party AI tools pose increasing risks for organizations [MIT + BCG]

🚀 How an archeological approach can help leverage biased data in AI to improve medicine

Other interesting reads

🚀 LLM Training: RLHF and Its Alternatives

🚀 NExT-GPT: Any-to-Any Multimodal LLM

🚀 Amazon restricts authors from self-publishing more than three books a day after AI concerns

🚀 How Silicon Valley doomers are shaping Rishi Sunak’s AI plans

Papers

🚀 Tracking Anything with Decoupled Video Segmentation

🚀 LMSYS-Chat-1M: A Large-Scale Real-World LLM Conversation Dataset

🚀 OpenChat: Advancing Open-source Language Models with Mixed-Quality Data

- Not all training data is created equal

🚀 BayesDLL: New Bayesian Deep Learning Library

Cool companies found this week

Non-Profit

Fast Forward Startup Accelerator - Provides a $25K philanthropic grant, training from seasoned tech nonprofit founders, and mentorship from tech experts.

Climate

Carbon 13 - Venture Launchpad - A startup accelerator for carbon reduction and removal.

Demo of recent video segmentation advancements

Best,

Marcel Hedman

Nural Research Founder

www.nural.cc

If this has been interesting, share it with a friend who will find it equally valuable. If you are not already a subscriber, then subscribe here.

If you are enjoying this content and would like to support the work financially then you can amend your plan here from £2/month!