Welcome to Nural's newsletter focusing on how AI is being used to tackle global grand challenges.

Packed inside we have

- Stanford Index: Transparency of AI Models

- Generate images directly in Google Search

- The Techno-Optimist Manifesto - Andreessen Horowitz

- and Table-GPT: GPT finetuned for table tasks

If you would like to support our continued work from £2/month then click here!

Marcel Hedman

Key Recent Developments

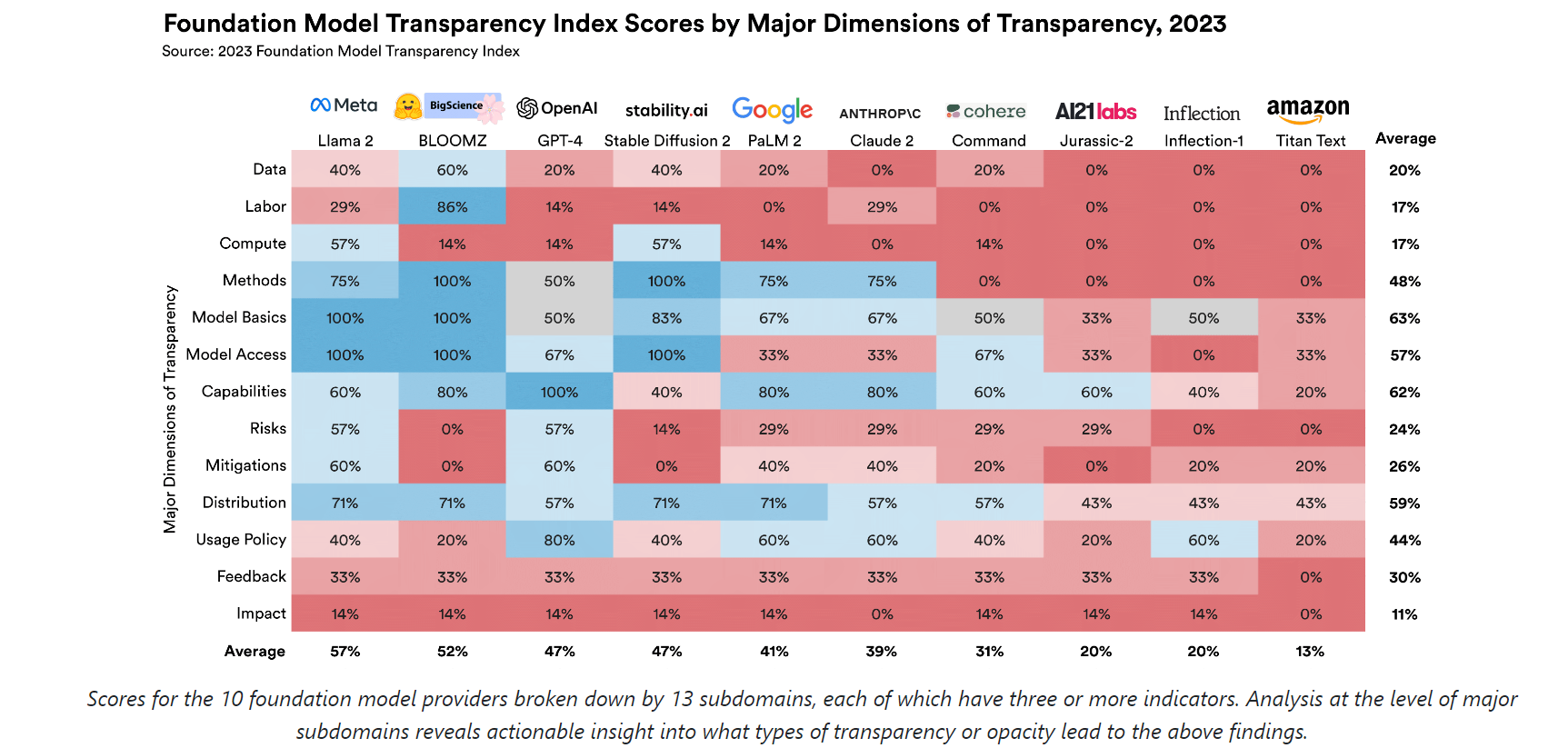

The world’s biggest AI models aren’t very transparent, Stanford study says


What: A comprehensive assessment of the transparency of foundation model developers has been released, and it finds significant room for improvement across all actors. Performance was particularly low on data, labor, and compute: "All developers' scores sum to just 20%, 17%, and 17% of the total available points for data, labor, and compute."

Key Takeaway: Performance measurement is an effective way to drive improvement, and that is exactly what the release of this index can do. Independent standards such as these have the potential to make an impact, but that potential may only be realized as the index establishes itself further in the eyes of consumers and regulators.

Mustafa Suleyman and Eric Schmidt [DeepMind/Inflection AI cofounder & former Google CEO]: We need an AI equivalent of the IPCC

What: Suleyman and Schmidt argue that AI is developing so rapidly that even those building the technology struggle to keep up, leaving regulators with little hope of keeping pace. They therefore suggest establishing an independent, expert-led body empowered to objectively inform governments about the current state of AI capabilities and to make evidence-based, scientific predictions about what’s coming.

The goal: An International Panel on AI Safety could offer an objective body to help shape protocols and norms.

Generative AI in Google Search

What: Google is adding image generation to its Search Generative Experience (SGE), enabling you to create images from a text prompt, in what is a direct response to Bing's generative capabilities powered by OpenAI. The initial prompt will also be augmented through generative text to create multiple variants of the starting image (see graphic above).

Key Takeaway: As foundation models become increasingly commoditised, distribution and user access become a major lever for AI value capture. Few products have more distribution than Google Search, with >100,000 searches each second, so this move represents a huge opportunity to introduce generative capabilities to many users for the first time, and thereby to own their present and future experience with the technology.

AI Ethics & 4 good

🚀 Open-source AI firm Hugging Face confirms ‘regrettable accessibility issues’ in China

🚀 Collective Constitutional AI: Aligning a Language Model with Public Input [Anthropic]

🚀 AI Just Deciphered an Ancient Herculaneum Scroll Without Unrolling It

🚀 Brain implants that enable speech pass performance milestones

Other interesting reads

🚀 Nvidia thought it found a way around U.S. export bans of AI chips to China—now Biden is closing the loophole and investors aren’t happy

🚀 The Techno-Optimist Manifesto - Andreessen Horowitz

Papers

🚀 Table-GPT: Table-tuned GPT for Diverse Table Tasks [Microsoft]

🚀 Universal Visual Decomposer: Long-Horizon Manipulation Made Easy

🚀 LongLLMLingua: Accelerating and Enhancing LLMs in Long Context Scenarios via Prompt Compression

Cool companies found this week

Foundation models

Baichuan Intelligent Technology - Recently raised $300M from Alibaba, Tencent and Xiaomi. The Chinese company has rolled out numerous LLMs, including four open-source models that have been downloaded more than 6 million times, and two proprietary models known as Baichuan-53B and Baichuan2-53B.

Best,

Marcel Hedman

Nural Research Founder

www.nural.cc

If this has been interesting, share it with a friend who will find it equally valuable. If you are not already a subscriber, then subscribe here.

If you are enjoying this content and would like to support the work financially then you can amend your plan here from £2/month!