Welcome to Nural's newsletter focusing on how AI is being used to tackle global grand challenges.

Packed inside we have

- Humanoid robot artist Ai-Da speaks at House of Lords meeting

- GitHub Users Want to Sue Microsoft For Training an AI Tool With Their Code

- and Generally Intelligent secures cash from OpenAI vets to build capable AI systems

If you would like to support our continued work from £1 then click here!

Marcel Hedman

Key Recent Developments

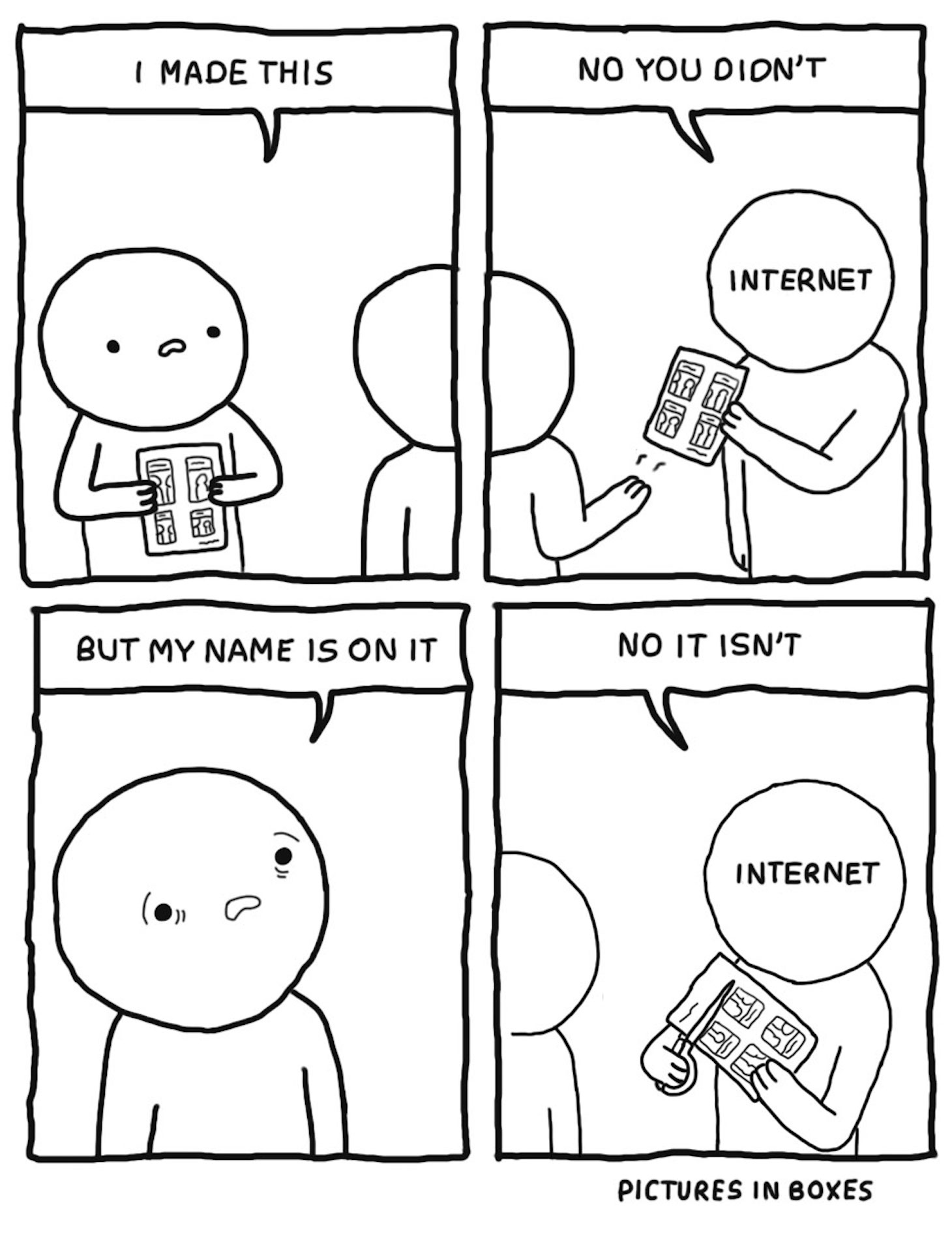

GitHub Users Want to Sue Microsoft For Training an AI Tool With Their Code

What: GitHub's “Copilot”, a system that autocompletes code as it's being written within an IDE (integrated development environment), has come under scrutiny. Code autocomplete is not novel in itself; where Copilot differs is the mechanism behind it: an AI-powered system created by OpenAI.

The AI system was trained using billions of lines of open-source code hosted on sites like GitHub. The people who wrote the code are not happy.

Believing that the use of their open-source GitHub code has violated the accompanying licenses, they have begun investigating what a class action lawsuit would look like.

Key Takeaway: There is a trend towards greater accountability between commercialised generative models and the creators who generated the data powering such models. Shutterstock, which provided images to OpenAI for the creation of the image generation model DALL-E, is also facing the question of how to properly compensate its artists. Will new license standards need to be created for the fair use of data when incorporated into generative ML models?

Humanoid robot Ai-Da speaks at House of Lords meeting

What: A humanoid robot, Ai-Da, spoke at a committee hearing in the UK's House of Lords (the second chamber of the UK Parliament). The humanoid robot has the ability to interact via audio and speech and was primarily designed to create art.

Under the hood, the system has different ML algorithms for each of its functions (speaking, writing, drawing and painting), so this is not an all-encompassing single model. However, the combination of functionality under one system is impressive nonetheless.

Key Takeaway: The proceedings began with a disclaimer that the robot is not in itself an entity and that its creator is ultimately responsible for the outputs it creates. At this stage of AI development, this represents a significant landmark in the discussion around liability and accountability as it relates to AI systems and their creators.

Live clip of the humanoid robot speaking at the House of Lords meeting

AI Ethics & 4 good

🚀 US Eyes Expanding China Tech Ban to Quantum Computing and AI

🚀 White House AI Bill of Rights Is All Wrong, Says Center for Data Innovation

🚀 FDA clears noninvasive AI-powered coronary anatomy, plaque analyses

Other interesting reads

🚀 Deloitte’s State of AI in the Enterprise report

🚀 Google in talks to invest $200m in natural language startup Cohere

🚀 Shutterstock partners with OpenAI and leads the way to bring AI generated content to all

🚀 Generally Intelligent secures cash from OpenAI vets to build capable AI systems

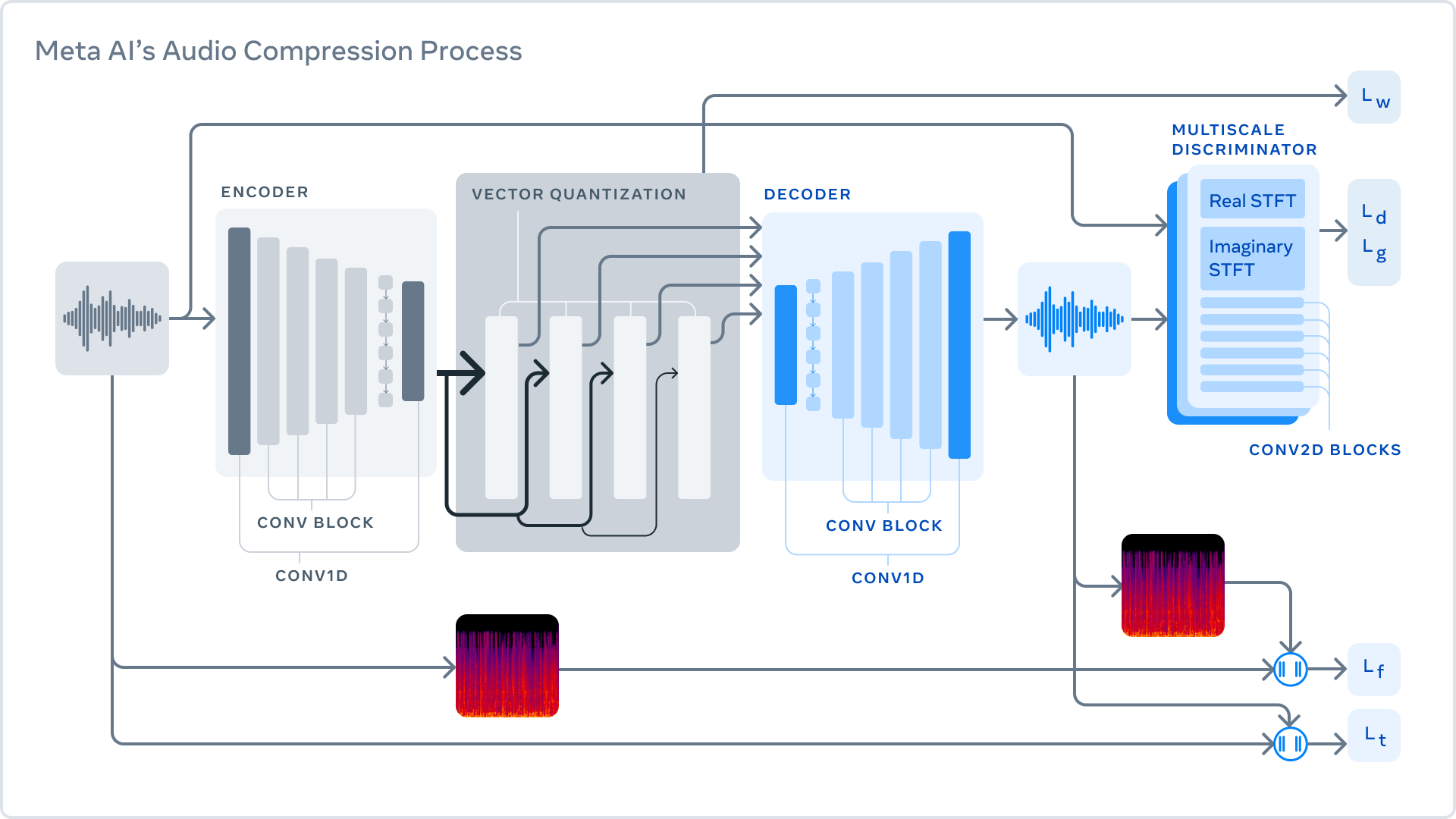

🚀 Using AI to compress audio files for quick and easy sharing

Cool companies found this week

Health

Biobeat - Biobeat’s solution uses health-AI and ML on big data to provide actionable insights on patient care. More than just analyzing the data, Biobeat also generates it, using its proprietary sensor for continuous monitoring of vital signs. See an analysis here

HeartFlow - Non-invasive personalized cardiac tests providing visualization of each patient’s coronary arteries, enabling physicians to create more effective treatment plans for their patients.

AI Research

Generally Intelligent - Their mission is to unlock human creativity and insight by developing general-purpose agents with human-like intelligence that can be safely deployed in the real world.

CarperAI - Spun out of EleutherAI, CarperAI is doing human preference learning at scale via a representation learning + RL approach. They're building large-scale, natural text personalized preference models.

AI Art

Ai-Da - "The world’s first ultra-realistic humanoid robot artist". The robot draws and paints using cameras in its eyes, AI algorithms, and a robotic arm.

...and Finally

Sketch by GitHub Copilot investigation

AI/ML must knows

Foundation Models - any model trained on broad data at scale that can be fine-tuned to a wide range of downstream tasks. Examples include BERT and GPT-3. (See also Transfer Learning)

Few-shot learning - Supervised learning using only a small dataset to master the task.

Transfer Learning - Reusing parts or all of a model designed for one task on a new task with the aim of reducing training time and improving performance.

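Generative adversarial network - A generative model in which two networks are trained against each other: a generator that creates new data instances resembling the training data, and a discriminator that tries to tell generated instances from real ones. They can be used to generate fake images.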

Deep Learning - Deep learning is a form of machine learning based on artificial neural networks.

Best,

Marcel Hedman

Nural Research Founder

www.nural.cc

If this has been interesting, share it with a friend who will find it equally valuable. If you are not already a subscriber, then subscribe here.

If you are enjoying this content and would like to support the work financially then you can amend your plan here from £1/month!