Welcome to Nural's newsletter where you will find a compilation of articles, news and cool companies, all focusing on how AI is being used to tackle global grand challenges.

Our aim is to make sure that you are always up to date with the most important developments in this fast-moving field.

We have a slightly longer edition for you this week. Make sure to check out Nural's recent data-centric project!

Packed inside we have:

- Google's DeepMind predicting gene expression with AI

- Facebook's next step towards the metaverse

- Microsoft and NVIDIA lay claim to world's largest language model

- plus, LinkedIn championing responsible AI

If you would like to support our continued work from £1 then click here!

Graham Lane & Marcel Hedman

Key Recent Developments

Google DeepMind predicting gene expression with AI

What: The human genome is made up of roughly 3 billion “letters”. Approximately 2% of these are genes that perform various essential functions in cells. The remaining 98% are called “non-coding” and contain less well understood instructions about when and where genes should be produced or expressed in the human body. How this takes place is a major unsolved problem. New research by DeepMind claims substantially improved gene expression predictions from DNA sequences through the use of a new AI deep learning architecture.

Key Takeaways: Developments in this area will have critical downstream applications in human genetics. For example, complex genetic diseases are often associated with variants in the non-coding DNA that potentially alter gene expression.

Paper: Effective gene expression prediction from sequence by integrating long-range interactions

Code: deepmind-research

Facebook is researching AI systems that see, hear, and remember everything you do

What: Facebook has launched an ambitious research project called Ego4D, creating a large collection of first-person (“egocentric”) videos of people completing everyday tasks that will ultimately be used to train augmented reality systems. Most current image datasets consist of third-person views, so vision systems struggle to understand first-person views. The resulting technology could eventually be included in wearable cameras and home assistant robots, helping to find lost items, monitor social interactions, and provide real-time assistance with learning or undertaking everyday tasks.

Key Takeaways: This new development might exacerbate the trust issues that Facebook is currently facing. A key recommendation for AI development is that an ethical risk assessment should be built in at project inception, but that appears not to be the case for Ego4D.

How AI can fight human trafficking

What: Traffic Jam is a new AI system “to find missing persons, stop human trafficking and fight organized crime.” It combs through online ads in speciality “hot spots” (commercial adult services) and searches for “vulnerability indicators.” These can include images of subjects who look like children and indications of drug use. The intention is to aid law enforcement.

Key Takeaways: This provides an example of the legal and ethical dilemmas in this area. Efforts to help victims of trafficking are laudable. However, there are similarities with another company, Clearview, which secretly scraped 10 billion images of faces from the web. Subsequent use of its technology by law enforcement and private companies has provoked a storm of controversy.

LinkedIn’s approach to building transparent and explainable AI systems

What: LinkedIn provides details about its recently launched Responsible AI program. This involves both building products and programs that empower individuals regardless of their background or social status and ensuring that systems are transparent. Predictive machine learning models are widely used at LinkedIn. The company has developed a customer-facing model explainer system that provides understandable interpretations of predictions and the rationale behind them.

Key Takeaways: Facebook has recently faced criticism from a whistle-blower during testimony to a Senate sub-committee. A key allegation is that changes to the Facebook newsfeed recommender system in 2018 increased, rather than reduced, divisive content, and that this was not addressed. By contrast, the LinkedIn Responsible AI program shows that a corporation can take active steps to operate transparently when motivated.

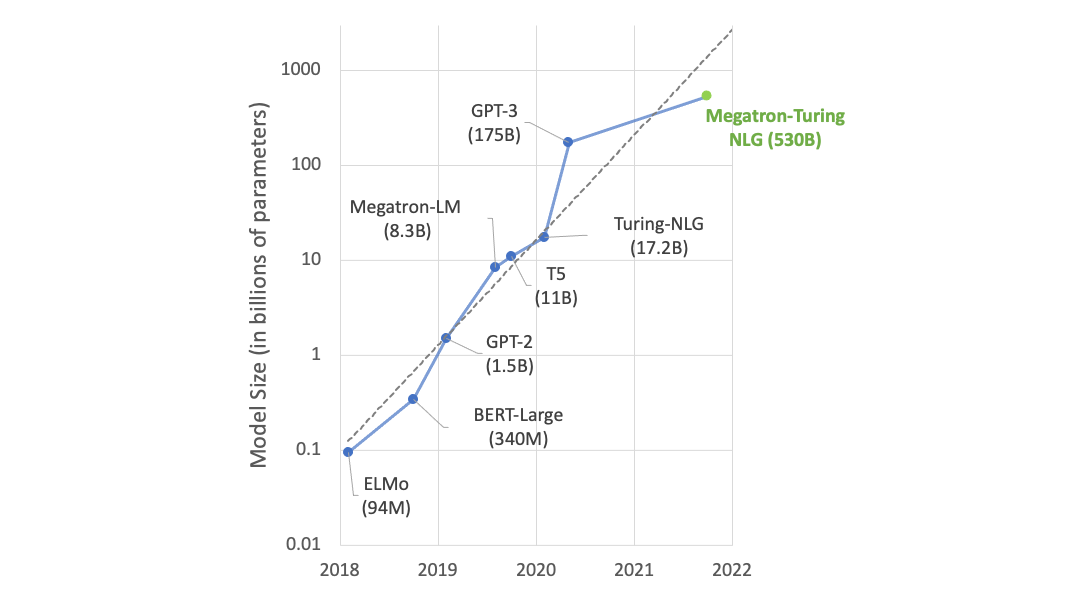

Microsoft and NVIDIA claim the world’s largest and most powerful AI language model

What: Microsoft and NVIDIA have produced what they claim is the largest and most powerful transformer language model trained to date, with 530 billion parameters. The system advances the state of the art in AI for many natural language tasks. By comparison, the well-known GPT-3 system has 175 billion parameters.

Key Takeaways: AI models continue to increase in size and to achieve ever better results. But this comes at a huge financial and environmental cost (in terms of carbon emissions). There are concerns that this further strengthens Big Tech, locking out smaller companies and stifling scientific research. Consequently, work on other avenues of improvement, such as improved data quality and technical enhancements to models, is a growing area of research.

AI Ethics

🚀 Driving AI innovation in tandem with regulation

Explores the proposition that “difficult” new regulations around the use of high-risk AI could spur AI innovation in the EU.

🚀 MEPs back AI mass surveillance ban for the EU

The non-binding resolution also calls for a moratorium on the deployment of predictive policing software.

🚀 How to prioritise humans in AI design for business

Proposes unbiasing (biased) human beings; data for good instead of data for bias; and educating citizens.

🚀 Duke computer scientist wins $1 million AI Prize, a 'new Nobel'

Cynthia Rudin wins prize for her work on “interpretable” AI in sensitive areas such as social justice and medical diagnosis.

Other interesting reads

🚀 Protein complex prediction with AlphaFold-Multimer

This pre-print article describes extending DeepMind AlphaFold predictions from single proteins to multi-chain protein complexes.

🚀 11 examples of AI climate change solutions for zero carbon

A list of global startups applying AI to climate change challenges.

🚀 Google is about to get better at understanding complex questions

Google is applying its new Multitask Unified Model (MUM) AI to improve Google Search, and implementing the new T5 language model.

🚀 Self-supervised learning advances medical image classification

Google employs “contrastive learning” to generate additional, robust training data without requiring additional labelled images.

🚀 IBM says AI can help track carbon pollution across vast supply chains

A new AI suite streamlines carbon footprint analysis across the supply chain and highlights risks arising from climate change.

Cool companies found this week

Duality - provides technology to apply AI to sensitive data without ever decrypting it and has received $30 million in series B funding.

Gretel - offers privacy-by-design tools and has raised $50 million in series B funding.

Neural Magic - provides AI software designed to run on Internet of Things devices and has gained $30 million in series A investment.

A flying robot that can skateboard – with killer high heels

AI/ML must-knows

Foundation Models - any model trained on broad data at scale that can be fine-tuned to a wide range of downstream tasks. Examples include BERT and GPT-3. (See also Transfer Learning)

Few-shot learning - Supervised learning using only a small dataset to master the task.

Transfer Learning - Reusing parts or all of a model designed for one task on a new task with the aim of reducing training time and improving performance.

Generative adversarial network - Generative models that create new data instances that resemble your training data. They can be used to generate fake images.

Deep Learning - Deep learning is a form of machine learning based on artificial neural networks.

Best,

Marcel Hedman

Nural Research Founder

www.nural.cc

If this has been interesting, share it with a friend who will find it equally valuable. If you are not already a subscriber, then subscribe here.

If you are enjoying this content and would like to support the work financially, you can amend your plan here from £1/month!