Welcome to Nural's newsletter where you will find a compilation of articles, news and cool companies, all focusing on how AI is being used to tackle global grand challenges.

Our aim is to make sure that you are always up to date with the most important developments in this fast-moving field.

Packed inside we have

- Microsoft open-sources "mixture of experts" library to train huge AI models

- New approach helps deep neural networks understand the relationships between objects

- and ML used to discover new protein communities in human cells

If you would like to support our continued work from £1 then click here!

Graham Lane & Marcel Hedman

Key Recent Developments

Microsoft open-sources "Mixture of Experts" library

What: Microsoft have open-sourced a library called Tutel which implements a “mixture of experts” (MoE) architecture for training huge (> 1 trillion parameter) deep neural networks. Different “experts” are used to train different aspects of the network and the results are aggregated into a single model. This is more efficient than monolithic approaches.

Key Takeaways: Traditional training scales linearly, meaning that the resources required grow in proportion to the number of parameters. This is unsustainable as models become huge. MoE scales sub-linearly, meaning that huge models can be trained with fewer resources. Facebook/Meta and the Beijing Academy of AI have also open-sourced MoE libraries. Despite the astounding progress of deep learning, active research is still yielding innovations at pace.
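For readers who like to see the idea in code, here is a minimal sketch of why MoE can be sub-linear. It is a toy in plain Python, not Tutel's API: the class `ToyMoELayer`, its method names and the top-1 routing rule are all assumptions made for illustration. A small "gate" scores the experts for each input and only the best-scoring expert is run, so the work done per input stays the same however many experts the layer holds.

```python
import math
import random


def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def softmax(scores):
    """Turn raw gate scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class ToyMoELayer:
    """Hypothetical toy layer: many experts, but only one runs per input."""

    def __init__(self, dim, num_experts, seed=0):
        rng = random.Random(seed)
        self.dim = dim
        # Each expert is a small dense layer (a dim x dim weight matrix).
        self.experts = [
            [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]
            for _ in range(num_experts)
        ]
        # The gate holds one row of weights per expert and scores each input.
        self.gate = [
            [rng.uniform(-1, 1) for _ in range(dim)] for _ in range(num_experts)
        ]

    def total_expert_params(self):
        """Parameters held by all experts (gate weights not counted)."""
        return len(self.experts) * self.dim * self.dim

    def active_expert_params(self):
        """Expert parameters actually used for a single input."""
        return self.dim * self.dim

    def forward(self, token):
        """Route the input to its top-1 expert and weight the result."""
        probs = softmax(matvec(self.gate, token))
        chosen = max(range(len(probs)), key=probs.__getitem__)
        output = matvec(self.experts[chosen], token)
        return chosen, [probs[chosen] * y for y in output]


small = ToyMoELayer(dim=4, num_experts=2)
large = ToyMoELayer(dim=4, num_experts=64)

token = [0.5, -1.0, 0.25, 2.0]
chosen, out = large.forward(token)

print(small.total_expert_params(), small.active_expert_params())  # 32 16
print(large.total_expert_params(), large.active_expert_params())  # 1024 16
```

The larger layer holds 32 times more expert parameters, yet each input still touches only one expert's worth of them. Real libraries add a lot on top of this (routing across many GPUs, load balancing between experts, more than one expert per input), none of which is shown here.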

Microsoft Research blog

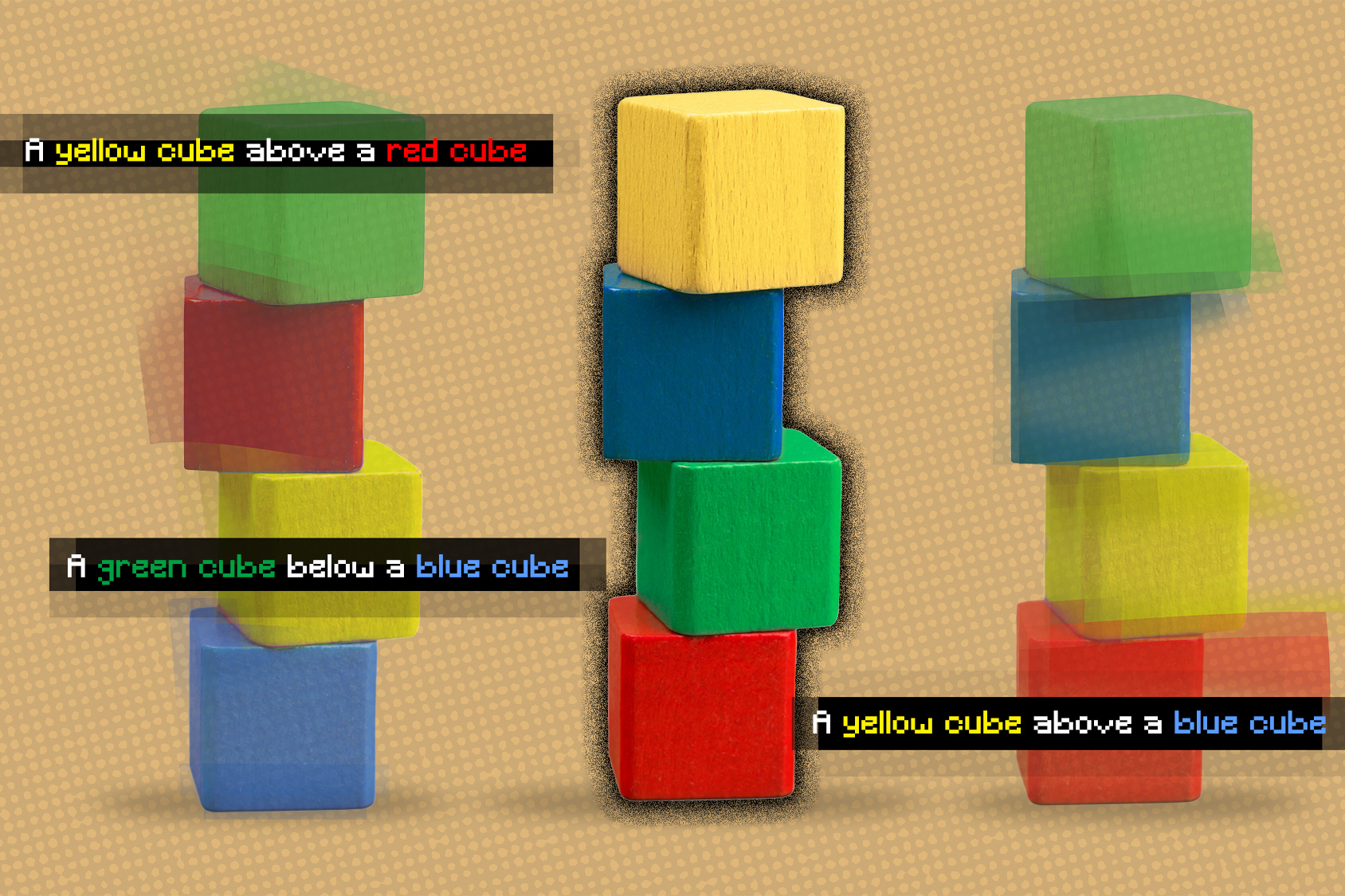

Artificial Intelligence that understands object relationships

What: Deep learning models do not understand relationships between objects in a scene. For example, a kitchen robot would have difficulty following a command like “pick up the spatula to the left of the stove and put it on top of the cutting board”. Researchers have now developed a machine learning model that understands the underlying relationships between objects, and can also generate images of scenes from text descriptions.

Key Takeaways: Developing visual representations dealing with the compositional nature of the world has been described as “one of the key open problems in computer vision” and the research makes a significant contribution to this area.

Paper: Learning to Compose Visual Relations (the paper includes some informative animations)

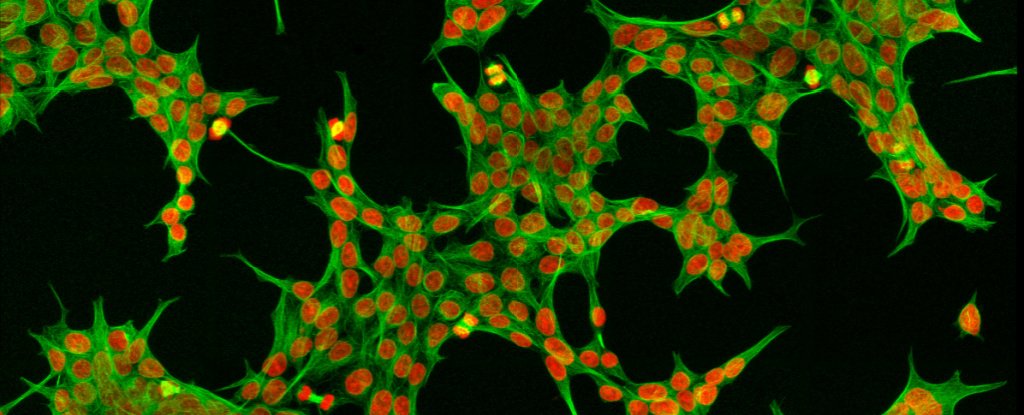

AI map suggests we've not even discovered half of what's inside our cells

What: Machine learning has been applied to create a unique multi-scale, unified hierarchical map of human cell architecture spanning four orders of magnitude, from nanometer to micron scale. The goal was to identify communities of proteins, called assemblies, that co-exist in cells at different scales. According to the authors, 69 subcellular systems were identified, of which approximately half were undocumented. Machine learning was used to integrate information from different sources at different scales into the unified model.

Key Takeaways: According to News Medical, the study identified new protein communities involved in the production of ribosomes, which are nanomachines essential to the survival of cells. Excessive ribosome production is associated with cancer whereas insufficient production may lead to developmental diseases.

Paper: A multi-scale map of cell structure fusing protein images and interactions

AI Ethics

🚀 AI incident database of AI harms realised in the real world

A dashboard of AI harms realised in the real world is being developed, inspired by similar databases in the aviation industry.

🚀 UNESCO recommendations point to need for AI ethics guidelines

UNESCO joins other bodies concerned about facial recognition in public spaces and "social credit scoring".

🚀 DWP urged to reveal algorithm that ‘targets’ disabled for benefit fraud

Disability groups express concern about the Department for Work and Pensions' algorithm targeting benefit fraud.

Other interesting reads

🚀 With the Metaverse on the way, an AI bill of rights is urgent

“The problem of humanity is the following: We have paleolithic emotions; medieval institutions; and God-like technology.”

🚀 Towards low-cost and efficient malaria detection

Researchers have developed a large-scale dataset of images to support the development of machine learning models that help doctors screen slides.

🚀 Nvidia’s latest AI tech translates text into landscape images

The API generates landscapes in response to text descriptions and updates the image as new text is added.

Nvidia API

Cool companies found this week

Health

LifeVoxel.ai - is developing a zero latency medical imaging SaaS platform and has raised $5 million in seed funding

Technology

Jina.ai - uses open source neural search to find information in unstructured data (including videos and images) and has raised $30 million in Series A funding

Technology

CatalyzeX - provides a platform to discover AI models and code to kick-start new projects and has raised $1.6 million in seed funding

And finally ...

Virtual real estate sells for record $2.4m in Decentraland metaverse

AI/ML must knows

Mixture of Experts - architecture in which sub-models specialized in different tasks are used inside a huge model (> 1 trillion parameters) in order to reduce the training overhead

Foundation Models - any model trained on broad data at scale that can be fine-tuned to a wide range of downstream tasks.

Few-shot learning - Supervised learning using only a small dataset to master the task.

Transfer Learning - Reusing parts or all of a model designed for one task on a new task with the aim of reducing training time and improving performance.

Generative adversarial network - Generative models that create new data instances that resemble your training data. They can be used to generate fake images.

Deep Learning - Deep learning is a form of machine learning based on artificial neural networks.

Best,

Marcel Hedman

Nural Research Founder

www.nural.cc

If this has been interesting, share it with a friend who will find it equally valuable. If you are not already a subscriber, then subscribe here.

If you are enjoying this content and would like to support the work financially then you can amend your plan here from £1/month!