Welcome to Nural's newsletter where you will find a compilation of articles, news and cool companies, all focusing on how AI is being used to tackle global grand challenges.

Our aim is to make sure that you are always up to date with the most important developments in this fast-moving field.

We now have a Jobs section, currently featuring an exciting data scientist role at the startup AxionRay.

Reach out to advertise your own tech roles!

Packed inside we have:

- Goodness gracious, great balls of fire! DeepMind 'sculpts' nuclear plasma.

- Google addresses the sustainability of machine learning training

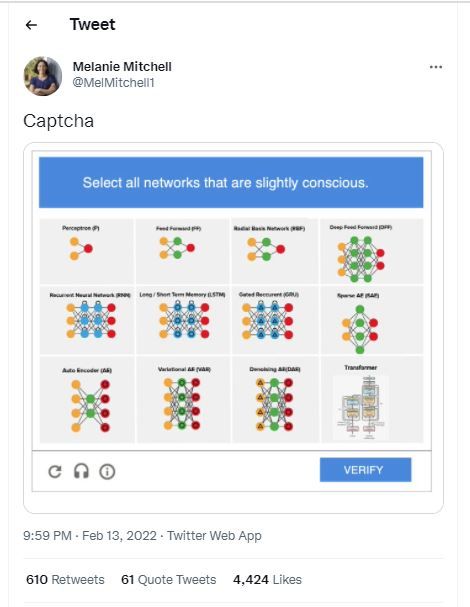

- and OpenAI provokes a mini Twitter storm by speculating whether large neural networks are 'slightly conscious'.

If you would like to support our continued work from £1 then click here!

Graham Lane & Marcel Hedman

Key Recent Developments

DeepMind accelerates fusion science through learned plasma control

What: DeepMind collaborated with the Swiss Plasma Center to develop an ML system that can control and sculpt the plasma in a nuclear fusion reactor (called a tokamak). The plasma, which is inherently unstable, is controlled by a complex array of magnetic coils. The voltage of the coils is adjusted thousands of times per second to ensure that the plasma does not touch the walls of the container. Existing systems control each coil separately, whereas DeepMind developed a single deep reinforcement learning network that controls all of the coils at once, automatically learning, directly from sensor readings, which voltages best achieve a given plasma configuration.

Key Takeaways: The work provides another example of AI experts successfully collaborating with research scientists to advance domain knowledge. In the past two years, DeepMind has applied machine learning expertise to accelerate scientific progress in a range of different areas including quantum chemistry, pure mathematics, material design, weather forecasting, computer coding and, now, physics.

Paper: Magnetic control of tokamak plasmas through deep reinforcement learning

Good news from Google about the carbon footprint of machine learning training

What: Google researchers investigated ways to reduce the carbon footprint of training machine learning models and identified four practices that have a large impact. They recommend: using efficient ML model architectures; using processors optimised for ML training; using cloud computing; and choosing green cloud data centres. They estimate that these four practices together can reduce energy consumption by 100x and emissions by 1000x.

Key Takeaways: Cost and lack of sustainability have emerged as major concerns undermining claims that AI and ML can play a role in addressing grand challenges. It is encouraging that detailed research is being carried out to understand this problem and develop ways to address it.

The paper adds weight to the view that the full lifecycle costs of IT systems, including the embedded carbon in manufacturing chips and building data centres, will be a bigger challenge than the operational cost of ML training alone.

Paper: The carbon footprint of machine learning training will plateau, then shrink
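The two headline figures can be related with a little arithmetic. The sketch below assumes the simplest possible model, in which operational emissions equal energy consumed multiplied by the carbon intensity of that energy. The function names and the baseline numbers are hypothetical illustrations and are not taken from the paper.

```python
def emissions_kg(energy_kwh: float, intensity_kg_per_kwh: float) -> float:
    """Operational emissions under the assumed model: energy x carbon intensity."""
    return energy_kwh * intensity_kg_per_kwh


def implied_intensity_reduction(energy_factor: float, emissions_factor: float) -> float:
    """Carbon-intensity reduction implied by the two headline factors."""
    return emissions_factor / energy_factor


# Headline reduction factors quoted in the item above.
ENERGY_FACTOR = 100.0
EMISSIONS_FACTOR = 1000.0

# Hypothetical baseline training run (illustrative numbers only).
baseline_energy_kwh = 1000.0
baseline_intensity = 0.5  # kg CO2e per kWh, an assumed value

intensity_factor = implied_intensity_reduction(ENERGY_FACTOR, EMISSIONS_FACTOR)
improved_energy_kwh = baseline_energy_kwh / ENERGY_FACTOR
improved_intensity = baseline_intensity / intensity_factor

before = emissions_kg(baseline_energy_kwh, baseline_intensity)
after = emissions_kg(improved_energy_kwh, improved_intensity)

print(f"implied carbon-intensity reduction: {intensity_factor:.0f}x")
print(f"emissions: {before:.1f} kg -> {after:.2f} kg")
```

Under this simple model, a 100x energy saving only becomes a 1000x emissions saving if the electricity itself is roughly ten times cleaner, which is plausibly where the choice of a green data centre comes in.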

Selected highlights from Climate Change AI

Climate Change AI virtual happy hour: next on Wed 2 Mar (held on the first and third Wednesday of each month)

Meetup: Can AI help us better project climate change? on Wed 9 Mar

Data science challenges:

Turtle Recall: build a machine learning model to identify individual sea turtles

Track particulate matter and air quality in a NASA competition

Climate Hack: apply cutting-edge ML to develop satellite imagery prediction for use in solar photovoltaic output forecasts.

AI Ethics

🚀 Meta’s RSC supercomputer brings revolutionary power — and privacy and bias concerns

An in-depth analysis of Meta’s new AI supercomputer and metaverse aspirations, examining the steps taken to ensure the system operates in an ethical manner and the concerns that remain.

🚀 Could ‘expiration dates’ for AI systems help prevent bias?

A proposal, inspired by food labels, to give AI models an “expiration date”, at which point they should be retired rather than continuing to make out-of-date predictions.

🚀 Applying the Trustworthy Artificial Intelligence Implementation (TAII) Framework on Tesla Bot

In 2021, Elon Musk announced the development of a humanoid robot. As a case study, the paper applies the Trustworthy Artificial Intelligence Implementation (TAII) Framework to the proposal and asks: Can Tesla implement trustworthy AI?

Other interesting reads

🚀 How AI is Changing Chemical Discovery

Further to recent news items about how ML is being applied to the discovery of new materials in various fields, this article provides a detailed overview of the current state of the art.

🚀 AI experts develop big data approach for wildlife preservation

A group of AI and animal ecology experts have developed a new big data approach to enhance research on wildlife species and improve wildlife preservation.

🚀 Mapping the world's fungal networks with machine learning

The aim of the project is to use ML to predict regions with high fungal diversity and to characterise those under threat from land-use changes.

🚀 Rising popularity of VR headsets sparks 31% rise in insurance claims

"Welcome to my Metaverse!"

Oops.

Crash.

Jobs

Data scientist - AxionRay

AxionRay is looking to hire a talented NLP data science lead as it enters hypergrowth. AxionRay is a stealth AI decision intelligence platform start-up, funded by top VCs, that works with electric vehicle engineering leaders to accelerate development.

Comp: $100k – $180k, meaningful equity!

If interested contact: marcel.hedman@axionray.com

Cool companies found this week

Clean energy

KoBold Metals - applies AI to find the materials critical for electric vehicles and renewable energy. The company is backed by Bill Gates and Jeff Bezos, amongst others. It has raised $192.5 million in Series B funding.

Healthcare

Scopio - provides an end-to-end platform for carrying out a common blood test that is currently done by humans. The system supports full remote control, plus AI analysis for pre-classification, detection, and quantification of blood cells. The company has secured $50 million in Series C funding.

AI development

New York-based Spell and Bristol-based Graphcore have announced a partnership to design and build “the next generation of AI infrastructure”. They are developing a new AI hardware-software package that combines specialist processing units with a hardware-agnostic ML software platform for deep learning, in order to make AI development faster, easier and less expensive.

And finally ...

AI/ML must knows

Foundation Models - any model trained on broad data at scale that can be fine-tuned to a wide range of downstream tasks. Examples include BERT and GPT-3. (See also Transfer Learning)

Few-shot learning - Supervised learning using only a small dataset to master the task.

Transfer Learning - Reusing parts or all of a model designed for one task on a new task with the aim of reducing training time and improving performance.

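Generative adversarial network - A generative model in which two neural networks, a generator and a discriminator, are trained against each other so that the generator learns to create new data instances that resemble your training data. They can be used to generate fake images.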

Deep Learning - A form of machine learning based on artificial neural networks with many layers.
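For readers who like to see an idea in code, here is a toy sketch of Transfer Learning in plain Python. It assumes a tiny "pretrained" feature extractor whose weights stay frozen, and trains only a small new head for a new task. Every name and number here (`FROZEN_WEIGHTS`, `features`, `train_head`, the dataset) is a hypothetical illustration; real systems reuse layers of a large neural network rather than two hand-picked weights.

```python
# Hypothetical "pretrained" weights reused from an earlier task. They are frozen:
# nothing in the training loop below ever updates them.
FROZEN_WEIGHTS = (1.0, 0.5)


def features(x: float) -> tuple:
    """Frozen feature extractor reused from the (assumed) original task."""
    return (FROZEN_WEIGHTS[0] * x, FROZEN_WEIGHTS[1] * x * x)


def predict(x: float, w: list, b: float) -> float:
    """New linear head applied on top of the frozen features."""
    f = features(x)
    return w[0] * f[0] + w[1] * f[1] + b


def mse(data: list, w: list, b: float) -> float:
    """Mean squared error of the head on a dataset of (x, y) pairs."""
    return sum((predict(x, w, b) - y) ** 2 for x, y in data) / len(data)


def train_head(data: list, steps: int = 2000, lr: float = 0.1):
    """Fit only the head (w, b) by gradient descent; the extractor is untouched."""
    w, b = [0.0, 0.0], 0.0
    n = len(data)
    for _ in range(steps):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for x, y in data:
            f = features(x)
            err = predict(x, w, b) - y
            grad_w[0] += err * f[0]
            grad_w[1] += err * f[1]
            grad_b += err
        w[0] -= lr * grad_w[0] / n
        w[1] -= lr * grad_w[1] / n
        b -= lr * grad_b / n
    return w, b


# New task with only five labelled examples: y = 2 * x^2 + 1.
new_task = [(x, 2.0 * x * x + 1.0) for x in (-2.0, -1.0, 0.0, 1.0, 2.0)]

loss_before = mse(new_task, [0.0, 0.0], 0.0)
w, b = train_head(new_task)
loss_after = mse(new_task, w, b)
print(f"loss before training the head: {loss_before:.3f}")
print(f"loss after training the head: {loss_after:.6f}")
```

Because only the three head parameters are learned, a handful of examples is enough here, which is the reduction in training effort that the definition above describes.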

Best,

Marcel Hedman

Nural Research Founder

www.nural.cc

If this has been interesting, share it with a friend who will find it equally valuable. If you are not already a subscriber, then subscribe here.

If you are enjoying this content and would like to support the work financially then you can amend your plan here from £1/month!