Welcome to Nural's newsletter, where you will find a compilation of articles, news and cool companies, all focusing on how AI is being used to tackle global grand challenges.

Our aim is to make sure that you are always up to date with the most important developments in this fast-moving field.

Packed inside we have:

- Meta gains kudos for work on ML transparency;

- Business perspective on AI-based climate tech;

- and, Do robots have to look like people?

If you would like to support our continued work from £1 then click here!

Graham Lane & Marcel Hedman

Key Recent Developments

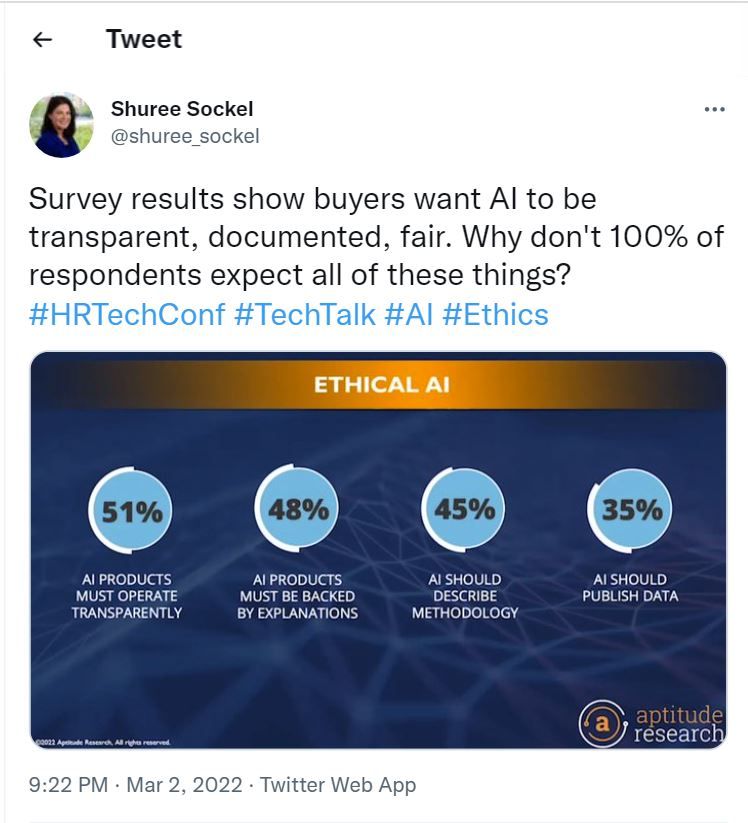

Meta’s new ‘system cards’ make Instagram’s AI algorithm a little less mysterious

What: During a recent major event called “Building the metaverse with AI”, Meta (parent company of Facebook and Instagram) introduced a new AI explanatory tool called “system cards”. The new approach seeks to provide a better overview of how the overall system operates, rather than focusing on the details of an individual machine learning model. The Instagram feed is presented as an example, with an explanation of when and how posts that breach community guidelines are removed, and when misinformation is identified and eliminated.

Key Takeaways: The Montreal AI Ethics Institute is a highly regarded research and activist centre that is quick to call out Big Tech if it falls below appropriate standards. In this case, the Institute was positive about this development, appreciating “the more holistic take that System Cards take compared to the narrower scope of other mechanisms that seek to imbue explicability”.

Paper: System-Level Transparency of Machine Learning

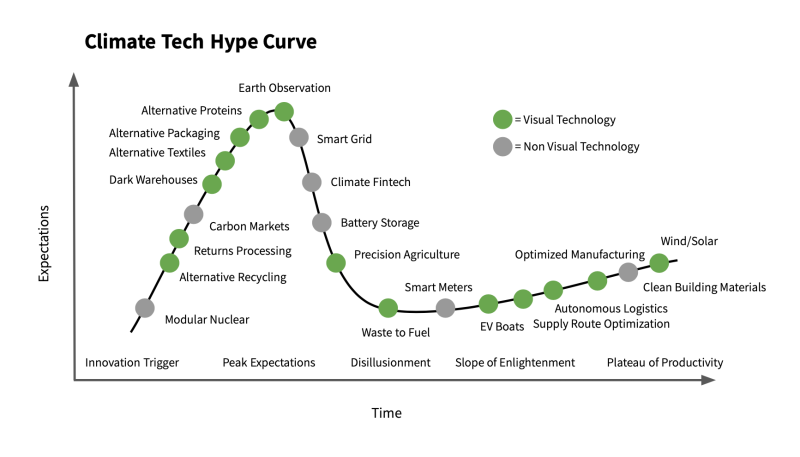

How visual data is propelling a new wave of climate tech

What: The article provides a business perspective on the development of AI-based climate tech, covering three areas. First, advances in visual data collection enable the monitoring of both land usage and water resources. Second, molecular imaging and design will make food and materials, such as lab-grown meat, more environmentally friendly. Finally, autonomous robotics will support developments in several areas. This includes real-time, plant-based agriculture with new weeding systems that reduce the need for pesticides. Another example is robotic automation allowing the development of “dark warehouses”, which decrease energy consumption and land usage.

Key Takeaway: It is disconcerting to notice an ever-increasing prominence of “risk measurement, and mitigation strategies for future catastrophes” compared to strategies for addressing climate change.

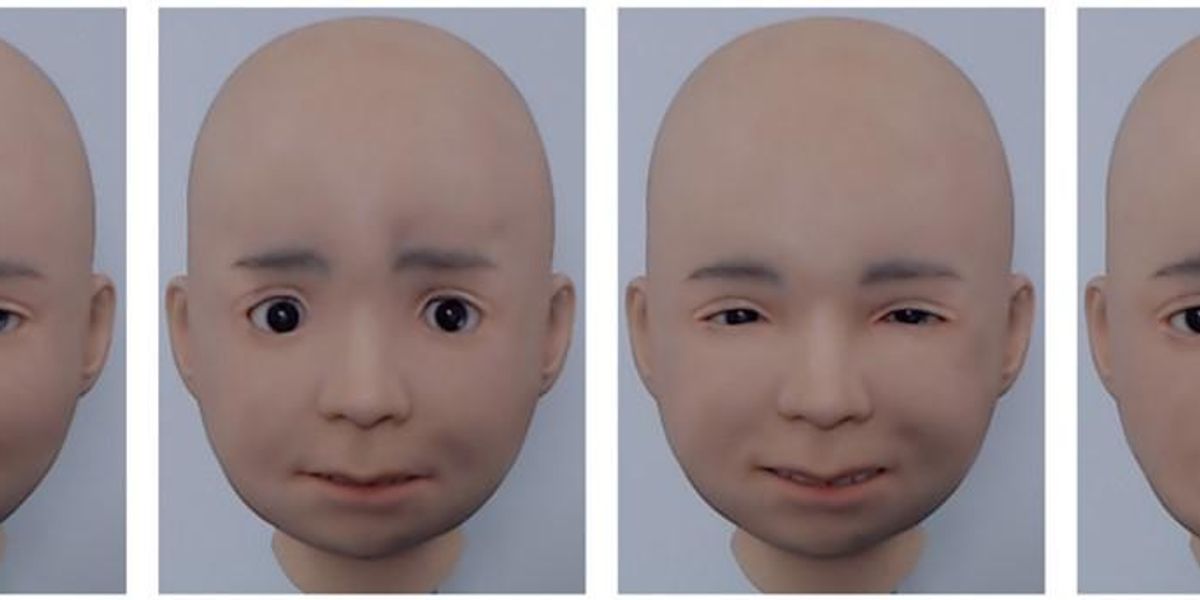

I am still not convinced that we need Androids

What: The article makes a key point regarding human-computer interaction, namely that assessing the performance of a humanoid robot against humans will “doom your robot to failure”. A book called “The case against tomorrow's robots looking like people” adds that humans may be less inclined to explore and use the advanced functionality of a robot that looks like a person.

Key Takeaways: In August 2021 Elon Musk announced the development of a humanoid robot called Tesla Bot. However, the optimal manner in which humans will interact with advanced AI remains an open question. There is a lot of focus on virtual teammates. For example, this week’s Cool Companies section features 3 startups working with avatars and “virtual humans”. In addition, Work Fusion has launched AI tools that automate daily business tasks using humanoid personas. The main linked article questions whether this is really the right approach to developing a robust relationship with advanced AI.

AI Ethics

🚀 Europe is in danger of using the wrong definition of AI

It may seem geeky, but the definition of "Artificial Intelligence" will be central to the scope of the forthcoming EU AI Act, in particular the balance between promoting business and innovation and protecting citizens' rights. This article provides one side of the argument.

🚀 EU must ban predictive AI systems in policing and criminal justice

Fair Trials, European Digital Rights and 41 other European organisations issued a collective statement calling on the EU to ban predictive policing systems in the AI Act.

🚀 The pitfalls of AI that could predict the outcome of court cases

An AI system generates recommendations for corporate litigation, like whether to settle and which claims to assert, where to file or defend a lawsuit, and which attorneys and law firms might provide the best chance of success.

🚀 Affirmations with Moxie

Is it me, or is it really creepy to encourage your child to repeat platitudes recited to it by a robot that has been developed by a tech company?

Other interesting reads

🚀 Injecting fairness into machine-learning models

A new technique boosts models’ ability to reduce bias, even if the dataset used to train the model is unbalanced.

🚀 PolyCoder is an open source AI code-generator that researchers claim trumps Codex

Researchers develop an open source code generator that can write in C with greater accuracy than much larger, proprietary models from Big Tech.

🚀 A data-driven approach to understanding how the brain works

A research team linked mental function terms used in fMRI research papers to specific brain circuits in fMRI brain images, yielding six functional domains that differ from the conventional classification.

🚀 Grunt of the litter: scientists use AI to decode pig calls

ML does the grunt work in analysing pig sounds.

Cool companies found this week

Avatars and virtual beings

Neosapience - a Korean startup that has developed an AI-powered synthetic voice and video platform that lets users turn text into video without recording or editing in a studio. The company has raised $21.5 million in Series B funding.

Soul Machines - develops virtual humans using AI-driven synthetic voices and visuals. You can talk to one of the virtual humans on their web page. The company has raised $70 million in Series B funding.

BuzzAR - has recently launched CryptoToon, a platform enabling users to create, own and trade avatars using Non-Fungible Tokens (NFTs). The company is reportedly Southeast Asia’s first woman-led metaverse startup and has raised $3.8 million in seed funding.

And Finally ...

AI/ML must knows

Foundation Models - Any model trained on broad data at scale that can be fine-tuned to a wide range of downstream tasks. Examples include BERT and GPT-3. (See also Transfer Learning)

Few-shot learning - Supervised learning using only a small dataset to master the task.

Transfer Learning - Reusing parts or all of a model designed for one task on a new task with the aim of reducing training time and improving performance.

Generative adversarial networks - Generative models that create new data instances that resemble the training data. They can be used to generate fake images.

Deep Learning - A form of machine learning based on artificial neural networks with multiple layers.

Best,

Marcel Hedman

Nural Research Founder

www.nural.cc

If this has been interesting, share it with a friend who will find it equally valuable. If you are not already a subscriber, then subscribe here.

If you are enjoying this content and would like to support the work financially then you can amend your plan here from £1/month!